An intro to machine learning and neural networks in simple words

How has machine learning brought us here?

We’ve been discussing AI in some detail, especially its role in healthcare. AI has been in the news everywhere lately, and I am sure everyone has heard buzzwords like “generative AI”, “LLMs”, and even plain old machine learning over the past 6 months. We will spend more time covering the latest news and applications of these AI algorithms, but first let’s cover the basics.

What is the basic concept behind machine learning?

Machine learning is a subset of artificial intelligence that builds computer systems which learn from data and help with applications such as predicting what will happen in the future.

Ultimately, machine learning learns from past and current data to give you a sense of what will happen or what you can expect. However, machine learning isn’t limited to predictions. It can also be used for automation, classification, clustering, and more. Almost every machine learning algorithm follows the same process (learning from old data) and has the same objective (making predictions or classifications on new data that is similar to the old data).

How do machine learning algorithms take data, train, and make predictions?

Before we delve into some of the specific algorithms that are part of machine learning, we need to understand a very important concept: supervised vs. unsupervised datasets.

Supervised datasets contain labeled data, that is, data that already comes with the outcomes attached. These datasets are designed to train or “supervise” algorithms into classifying data or predicting outcomes accurately. Using labeled inputs and outputs, the models can measure their accuracy and learn over time.

An easy example I like to give is that of classifying fruits. Let’s say that you have already shown the algorithm what an orange looks like, what an apple looks like, and what a pineapple looks like. If the algorithm then sees a picture of an orange or something similar to an orange, it will be able to automatically tag the fruit as an orange. You can imagine how useful this can be when we think about large datasets or when we need to classify something in real time.
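
To make the fruit example a little more concrete, here is a minimal sketch of one very simple supervised approach, a nearest-neighbor classifier. Instead of pictures, it assumes each fruit has already been boiled down to two made-up numbers (weight in grams and a "redness" score from 0 to 1). The data, the feature choice, and the `classify` function are all illustrative assumptions, not a real fruit recognizer.

```python
# Labeled training data: (weight in grams, redness 0..1) -> fruit name.
# All values are invented for illustration.
training = [
    ((150, 0.9), "apple"),
    ((170, 0.8), "apple"),
    ((140, 0.3), "orange"),
    ((160, 0.2), "orange"),
    ((1000, 0.1), "pineapple"),
    ((1200, 0.2), "pineapple"),
]

def classify(features):
    """Return the label of the training example closest to `features`."""
    weight, redness = features

    def distance(example):
        (w, r), _label = example
        # Divide weight by 1000 so grams do not drown out the redness score.
        return ((w - weight) / 1000) ** 2 + (r - redness) ** 2

    return min(training, key=distance)[1]

print(classify((155, 0.85)))   # a small, very red fruit
print(classify((1100, 0.15)))  # a heavy, not very red fruit
```

The key point is that the labels ("apple", "orange", "pineapple") come with the training data. The algorithm only has to find which labeled example a new fruit most resembles.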

Unsupervised datasets contain unlabeled data. Machine learning algorithms analyze and cluster this data, and may discover hidden patterns without needing someone to label it first.

A good example of unsupervised learning is grouping items. Remember those recommendation lists you see on Amazon? Amazon uses algorithms that group together items that people frequently buy together. So when you are browsing or buying a particular product, Amazon can recommend related products or tell you that other people bought a certain product along with the one you are currently looking at.
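
As a rough sketch of the "bought together" idea, the snippet below simply counts how often two items appear in the same order. Nobody labels anything; the pattern comes straight out of the raw orders. The orders and the `bought_with` helper are made up for illustration, and this is certainly not how Amazon's real recommendation system works.

```python
from collections import Counter
from itertools import combinations

# Made-up order history: each order is a set of items bought together.
orders = [
    {"phone", "case", "charger"},
    {"phone", "case"},
    {"laptop", "mouse"},
    {"phone", "charger"},
    {"laptop", "mouse", "case"},
]

# Count how many orders contain each pair of items.
pair_counts = Counter()
for order in orders:
    for pair in combinations(sorted(order), 2):
        pair_counts[pair] += 1

def bought_with(item):
    """Items that share an order with `item`, most frequent first."""
    related = Counter()
    for (a, b), count in pair_counts.items():
        if a == item:
            related[b] += count
        elif b == item:
            related[a] += count
    return [name for name, _count in related.most_common()]

print(bought_with("laptop"))
```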

What are some standard machine learning algorithms and how are they used?

LINEAR REGRESSION

Linear Regression is one of the most common algorithms used in machine learning, although it is not so much a machine learning concept as a statistics concept. Linear regression looks at a set of points, say a dataset where each entry has an input value and an output value. It draws a trendline through these points and predicts an outcome for a new input based on this trendline.

An example would be estimating your body fat from your weight and some other measurements, given that body fat is correlated with physical measurements. Let’s say that you have a set of body fat values associated with some input measurements (weight, height, etc.) (remember supervised learning!). You can fit a trendline to them (there are separate ways to measure the strength of your model and to check whether you have enough values). Linear regression will then be able to predict a body fat value for a new set of input measurements.
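
Here is a minimal sketch of fitting that trendline with ordinary least squares, using a single input (weight). The numbers are invented purely for illustration, and `fit_line` and `predict_body_fat` are names I made up for this example.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # slope = covariance(x, y) / variance(x)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Made-up labeled data: weight in kg -> body fat percentage.
weights = [60, 70, 80, 90, 100]
body_fat = [15, 18, 21, 24, 27]

slope, intercept = fit_line(weights, body_fat)

def predict_body_fat(weight_kg):
    """Read the prediction off the fitted trendline."""
    return slope * weight_kg + intercept

print(predict_body_fat(85))
```

With several inputs (weight, height, and so on) the idea is the same, but the trendline becomes a plane and the fit is usually done with a library rather than by hand.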

LOGISTIC REGRESSION

Logistic Regression is another very important algorithm that is used a lot in machine learning, especially by all types of corporations today. Logistic Regression is similar to Linear Regression in that it takes a set of inputs, but what it helps you predict is a binary output, and this is all based on probabilities that follow an S-shaped logistic (sigmoid) curve. So you could predict A or B, True or False, with a certain probability attached. Not only will you know what the model predicts, but also how strongly it predicts that outcome.

One of my favorite use cases for logistic regression is in medical imaging. You can use features taken from images or scans as inputs to predict whether someone’s tumor is malignant. For instance, the size of the tumor and the affected body area can be fed into a Logistic Regression classifier to identify whether the tumor is malignant or benign.

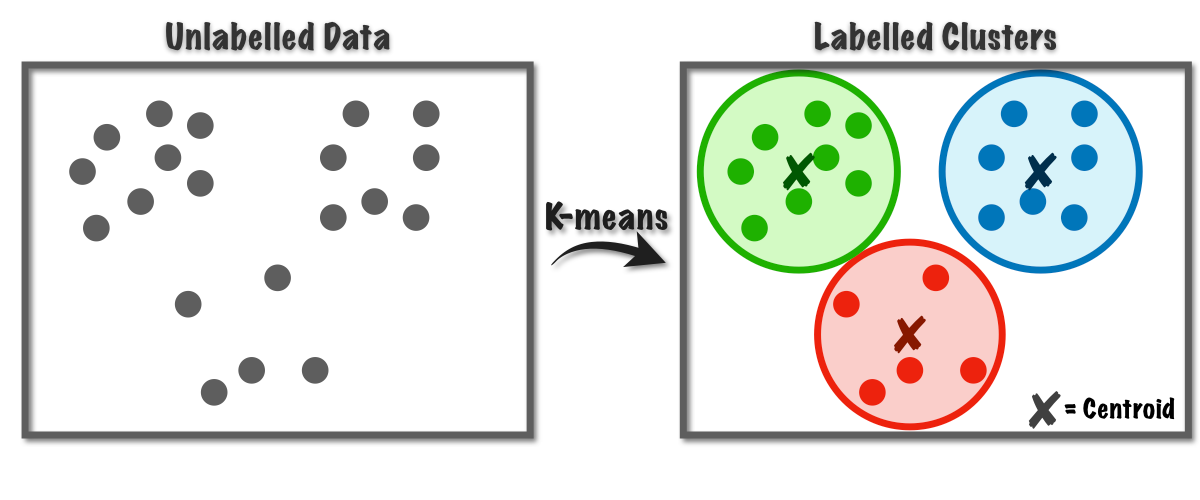
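
To make the probability idea concrete, here is a minimal sketch of logistic regression trained with plain gradient descent on one input, tumor size. The sizes and labels are made up, and the function names, learning rate, and number of training rounds are my own assumptions. This is a teaching toy, not a clinical model.

```python
import math

def sigmoid(z):
    """Squash any number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(xs, ys, lr=0.1, epochs=2000):
    """Learn weight w and bias b so that sigmoid(w * x + b) approximates P(y = 1)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradient of the average log loss with respect to w and b.
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Made-up data: tumor size in cm -> 1 if malignant, 0 if benign.
sizes = [1.0, 1.5, 2.0, 2.5, 3.5, 4.0, 4.5, 5.0]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = train_logistic(sizes, labels)

def prob_malignant(size_cm):
    return sigmoid(w * size_cm + b)

for size in (1.0, 3.0, 5.0):
    print(size, round(prob_malignant(size), 2))
```

The output is a probability rather than a bare yes or no, which is what lets you say how strongly the model leans toward "malignant" for a given tumor.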

K-MEANS CLUSTERING

Another really powerful algorithm is K-means. What is K-means? K-means uses averages (means) to sort data points into groups, so that each point ends up in the group whose mean is closest to it. Let’s say you want to form 3 groups. You start with three values that act as the average, or centroid, of each group. Each time you add a new data point, it gets attached to the group with the nearest centroid, and that group’s centroid gets updated for the next point. So as you add more data, your groups start to become more distinct and the algorithm does better and better. Now it could be that your data doesn’t separate into clear groups, so it is always important to have some sort of hypothesis about the raw data and what types of relationships you expect to exist.

Let’s say that you are trying to put cities into groups of low crime, medium crime, and high crime based on statistics such as murder rate, assault rate, and population. K-means will calculate averages across these variables and update the mean of each group with each new city you add. After you add enough data, you should have very distinct groups. There are also ways to work out the optimal number of groups; for example, you might find that the data supports two groups in the middle, such as below-average and above-average crime rates.

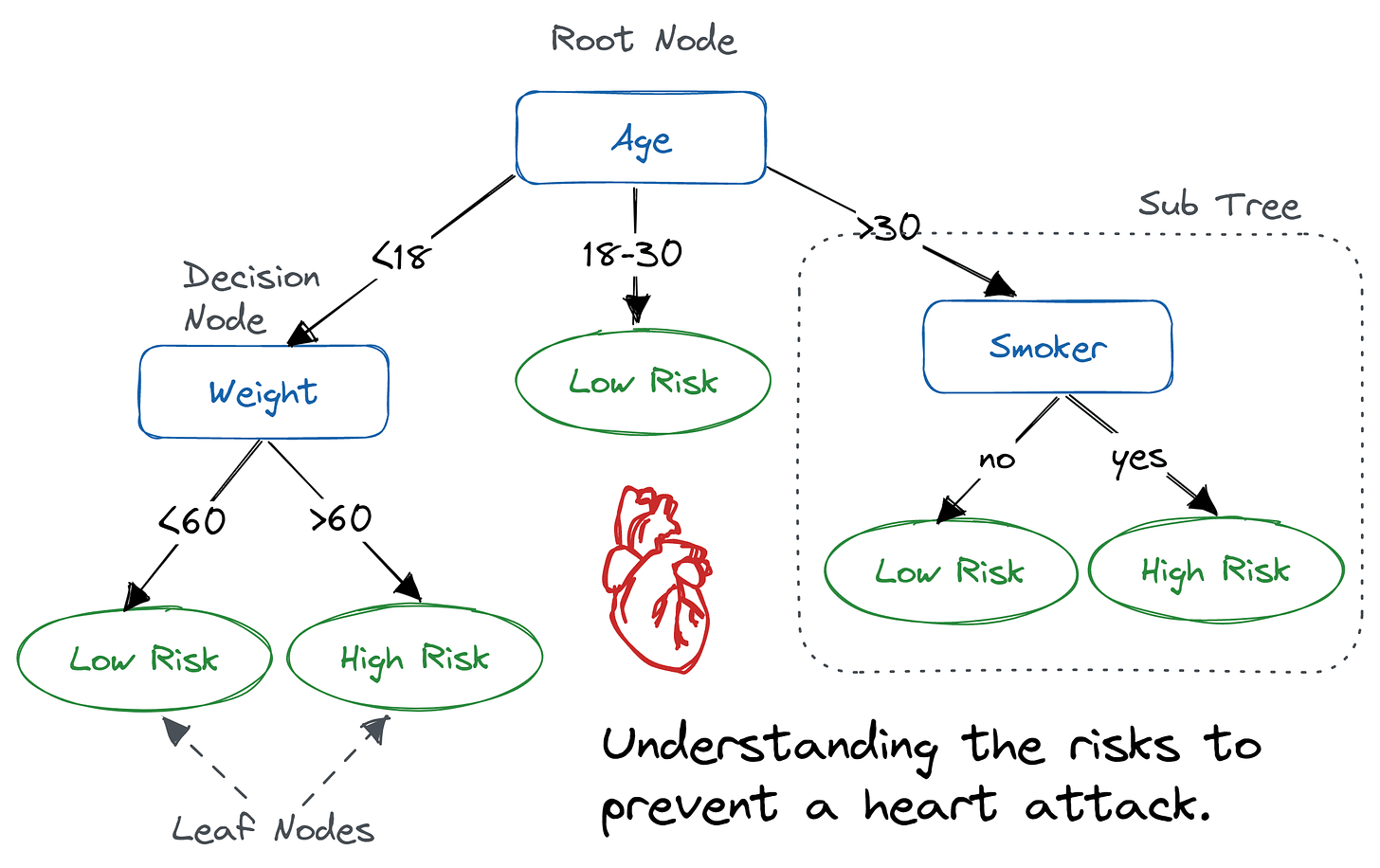
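
Below is a minimal sketch of K-means on the city example. Note that it uses the standard batch version of the algorithm, which re-assigns every city on each round, rather than adding cities one at a time as described above. The crime figures, the starting centroids, and the function names are all made up for illustration. The features are also left unscaled, so the assault rate dominates the distance; in practice you would usually put the features on a comparable scale first.

```python
def nearest(point, centroids):
    """Index of the centroid closest to `point` (squared Euclidean distance)."""
    distances = [sum((a - b) ** 2 for a, b in zip(point, c)) for c in centroids]
    return distances.index(min(distances))

def kmeans(points, centroids, rounds=10):
    """Repeat: assign each point to its nearest centroid, then move each
    centroid to the mean of the points assigned to it."""
    for _ in range(rounds):
        groups = [[] for _ in centroids]
        for p in points:
            groups[nearest(p, centroids)].append(p)
        centroids = [
            # Keep the old centroid if no point was assigned to it.
            tuple(sum(values) / len(g) for values in zip(*g)) if g else c
            for g, c in zip(groups, centroids)
        ]
    return centroids

# Made-up cities: (murder rate, assault rate) per 100,000 people.
cities = [
    (1, 50), (2, 60), (1.5, 55),
    (5, 200), (6, 220), (5.5, 210),
    (12, 500), (14, 520), (13, 510),
]
start = [(0, 0), (5, 250), (10, 600)]  # assumed initial guesses for 3 groups

centroids = kmeans(cities, start)
names = ["low", "medium", "high"]
group_of = {city: names[nearest(city, centroids)] for city in cities}

print(centroids)
print(group_of[(6, 220)])
```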

DECISION TREES & RANDOM FORESTS

Decision trees and random forests are another essential set of machine learning algorithms, used for both classification and regression. The main idea is to use the features of the data (for example, attributes related to the risk of heart attack such as age, weight, and smoking) to come up with a classification or prediction. A random forest builds many decision trees and combines their answers.

A simple example from healthcare is a risk score for a heart attack based on patient attributes. Features such as age, height, weight, drinking status, smoking status, and body fat would represent nodes in a “decision tree” that helps you categorize a patient as high-risk or low-risk. A decision tree algorithm not only sets up the nodes but also puts the more important attributes first (the ones that are the most powerful, aka can explain the data the best, much like the trendline in linear regression). It then traverses the tree, making a decision at each node, to arrive at a high-risk or low-risk classification.
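
One common way to decide which attribute is "most powerful" is to try every possible split and keep the one that separates the classes most cleanly, measured here with Gini impurity. The sketch below finds only the first (root) split of a tree. The patient data is invented, and `gini` and `best_split` are names I chose for this example.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels: 0.0 means perfectly pure."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)

def best_split(rows, labels, feature_names):
    """Return (impurity, feature, threshold) for the cleanest single split."""
    best = None
    for i, name in enumerate(feature_names):
        for threshold in sorted({row[i] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[i] <= threshold]
            right = [y for row, y in zip(rows, labels) if row[i] > threshold]
            if not left or not right:
                continue  # a split must send patients both ways
            # Weighted average impurity of the two sides.
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if best is None or score < best[0]:
                best = (score, name, threshold)
    return best

# Made-up patients: (age, smoker as 0/1) -> 1 if high-risk, 0 if low-risk.
patients = [(35, 0), (42, 0), (50, 1), (58, 1), (61, 0), (67, 1)]
high_risk = [0, 0, 1, 1, 0, 1]

print(best_split(patients, high_risk, ["age", "smoker"]))
```

In this toy data, smoking status separates the two groups perfectly, so it becomes the root of the tree. A full decision tree repeats the same search on each side of the split.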

NEURAL NETWORKS AND LARGE LANGUAGE MODELS

A neural network is a type of machine learning model inspired by the structure and functioning of the human brain's neural networks. Neural networks are used for various tasks such as pattern recognition, classification, regression, and decision-making based on data. Neural networks are also fundamental to deep learning. Deep learning is a subset of machine learning that involves training artificial neural networks with multiple hidden layers to learn and represent complex patterns and relationships within data. The term "deep" in deep learning refers to the use of multiple layers in neural networks, enabling them to learn hierarchical representations of data.

The basic building block of a neural network is a neuron, also called a node or unit. Neurons are organized into layers, and there are typically three types of layers:

Input Layer: This is the first layer, which receives the initial data or features as input.

Hidden Layers: These are the intermediate layers between the input and output layers. The hidden layers are responsible for learning patterns and features from the input data.

Output Layer: This is the final layer, which produces the model's output or prediction.

In a fully connected network, each neuron in a layer is connected to every neuron in the subsequent layer, and each connection carries a weight. These weights determine the strength of the connections between neurons and play a crucial role in learning.

Now, we need to learn about the two very important steps by which neural networks learn:

Forward Propagation: The input data is fed into the neural network, and the computation flows through the network from the input layer, through the hidden layers, and finally to the output layer. Each neuron performs a weighted sum of its inputs, passes it through an activation function, and produces an output.

Backpropagation: After obtaining the output, the neural network compares it to the expected output (ground truth) using a loss or cost function. The goal of the network is to minimize this loss. Backpropagation calculates the gradients of the loss function with respect to the network's weights, allowing the network to update its weights in the opposite direction of the gradient. This process iteratively adjusts the weights to reduce the prediction error and improve the model's performance.
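
The two steps above can be sketched with a tiny network: 2 inputs, one hidden layer of 2 neurons, and 1 output neuron, all using a sigmoid activation and a squared-error loss. The network size, starting weights, learning rate, and training data (a simple "is at least one input on?" task) are all assumptions chosen to keep the example small.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, p):
    """Forward propagation: weighted sum plus activation, layer by layer."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(p["w_hidden"], p["b_hidden"])]
    output = sigmoid(sum(w * h for w, h in zip(p["w_out"], hidden)) + p["b_out"])
    return hidden, output

def train_step(x, target, p, lr=0.5):
    """Backpropagation for the loss 0.5 * (output - target) ** 2."""
    hidden, output = forward(x, p)
    # Gradient of the loss with respect to the output neuron's weighted sum.
    delta_out = (output - target) * output * (1 - output)
    # Chain rule: pass the error back to each hidden neuron.
    delta_hidden = [delta_out * w * h * (1 - h) for w, h in zip(p["w_out"], hidden)]
    # Step every weight in the opposite direction of its gradient.
    for j, h in enumerate(hidden):
        p["w_out"][j] -= lr * delta_out * h
    p["b_out"] -= lr * delta_out
    for j, d in enumerate(delta_hidden):
        for i, xi in enumerate(x):
            p["w_hidden"][j][i] -= lr * d * xi
        p["b_hidden"][j] -= lr * d

def mean_loss(data, p):
    return sum(0.5 * (forward(x, p)[1] - t) ** 2 for x, t in data) / len(data)

# Made-up task: output 1 if at least one input is 1, else 0.
data = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
params = {
    "w_hidden": [[0.1, -0.2], [0.3, 0.2]],  # arbitrary small starting weights
    "b_hidden": [0.0, 0.0],
    "w_out": [0.2, -0.1],
    "b_out": 0.0,
}

loss_before = mean_loss(data, params)
for _ in range(2000):
    for x, target in data:
        train_step(x, target, params)
loss_after = mean_loss(data, params)

print(loss_before, loss_after)
```

Real deep learning libraries compute these gradients automatically, but the loop is the same: run forward, measure the loss, push the error backward, and nudge the weights.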

A large language model refers to a type of artificial intelligence model designed to understand, generate, and process human language. These models are typically based on deep learning techniques, specifically on neural networks with a large number of parameters and layers. Large language models have significantly more parameters than traditional language models, allowing them to capture complex patterns and relationships in language data.

The development of large language models has been a significant breakthrough in natural language processing (NLP) and has led to remarkable advancements in various language-related tasks such as language generation; question answering; and text completion, extraction, and prediction.

Prominent examples of large language models include:

GPT (Generative Pre-trained Transformer): Developed by OpenAI, GPT uses a Transformer architecture and has been released in various versions, including GPT-3, which has 175 billion parameters.

BERT (Bidirectional Encoder Representations from Transformers): Introduced by Google AI, BERT has revolutionized NLP by pre-training a bidirectional Transformer model on a large corpus of text.

Llama 2 (Large Language Model Meta AI): This is the model we talked about in an earlier post. Llama 2 is a large language model released by Meta. It is aimed primarily at organizations serving generative use cases, which is why it comes pre-trained on large volumes of data from all over the internet.

A very direct application of deep learning and large language models is to extract more reliable information from unstructured healthcare data. Real-world data companies often find it hard to be confident about the validity of a cohort, i.e., whether a patient or set of patients actually has the target disease. Deep learning can go beyond claims and databases into handwritten notes and speech to provide higher trust in the data.

CONCLUSION

Some of the algorithms and examples that we talked about hopefully give you an idea of how important machine learning and neural networks are, and how they serve as the backbone of many of the applications we encounter today.